Digital Colour & Filters

When we say that a sensor photosite captures photons, what we really mean is that it measures the intensity of the light falling on it, that is, how many photons arrive. More photons make the pixel brighter, while fewer photons give a darker one. At the end of the day, all the photosites record is white, black and a multitude of greys in between. So how do we get colours? We get them by passing the light through filters. The most commonly used filter in digital cameras is the Bayer filter, which is based on the principles of human vision, so let's start by examining how the human eye interprets the visible colour spectrum.

The eye is the human visual sensory organ, and it functions much like a camera. Light from the target image passes through the lens of the eye and is focused on the back of the eye, or retina, where numerous photosensitive receptors respond to the varying wavelengths of light. The human eye has two types of receptors that enable vision: cones, which interpret colour hues, and rods, which interpret shades of grey. The cones come in three types, each responsive to a particular band of light; as a generalization these can be labeled red (long wavelengths), green (medium) and blue (short). All colours are perceived from varying intensities of light stimulating none, some or all three types of cone simultaneously. No stimulation of the cones gives us black, equal stimulation of all three gives us white, and all other combinations of stimulation provide the rest of the visible colour spectrum. The rods, on the other hand, are used in low-light situations and provide us with night vision.

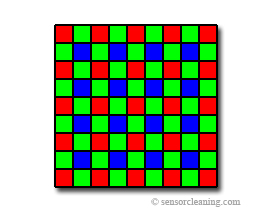

Bayer Filter

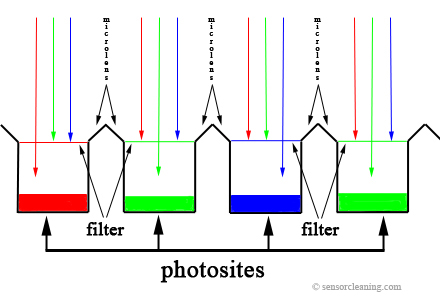

A Bayer filter, named for its inventor Dr. Bryce E. Bayer of Eastman Kodak, is an arrangement of individual colour filters over a photosensor array that allows the array to capture a colour image. The filter mimics the human eye: each filter passes only red, green or blue light, and there are twice as many green filters as there are blue or red ones. Green is doubled because human vision is most sensitive to light in the green part of the spectrum. Each photosite therefore registers the photon intensity for just one of the three colours. Once the full image has been captured, it is digitized into RAW output which, with most cameras these days, can be saved as a file or further processed into a colour image file (such as a JPEG or TIFF). Because each photosite captures information for only one colour, a demosaicing algorithm uses information from the surrounding photosites to interpolate the full colour of each pixel. Different software algorithms reproduce colour with varying degrees of accuracy, and it is often noted that processing with software on your computer, such as Photoshop, renders a better image than your camera's firmware does.
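
To make the sampling and interpolation concrete, here is a minimal Python sketch. It assumes an RGGB tile layout (red at the top-left of each 2 x 2 block), represents images as lists of rows of (r, g, b) tuples, and uses simple bilinear interpolation, averaging the nearest photosites of each missing colour. The function names are hypothetical, and real cameras and RAW converters use more sophisticated demosaicing algorithms.

```python
# Minimal sketch of Bayer sampling and bilinear demosaicing.
# Assumptions (not from the article): an RGGB tile layout, images stored as
# lists of rows of (r, g, b) tuples, and plain neighbour averaging.

RED, GREEN, BLUE = 0, 1, 2


def bayer_channel(row, col):
    """Colour passed by the filter at (row, col) for an RGGB tile:
    R G on even rows, G B on odd rows, so half of all sites are green."""
    return (row % 2) + (col % 2)


def mosaic(image):
    """Simulate the sensor: each photosite keeps only its filter's colour."""
    return [
        [pixel[bayer_channel(r, c)] for c, pixel in enumerate(row)]
        for r, row in enumerate(image)
    ]


def demosaic(raw):
    """Bilinear demosaic of an RGGB mosaic (at least 2 x 2 photosites).

    The measured colour is kept at each site; each missing colour is the
    average of that colour's photosites in the surrounding 3 x 3 window,
    clipped at the image edges."""
    height, width = len(raw), len(raw[0])
    result = []
    for r in range(height):
        out_row = []
        for c in range(width):
            rgb = []
            for channel in (RED, GREEN, BLUE):
                if channel == bayer_channel(r, c):
                    rgb.append(float(raw[r][c]))  # measured directly
                    continue
                samples = [
                    raw[rr][cc]
                    for rr in range(max(0, r - 1), min(height, r + 2))
                    for cc in range(max(0, c - 1), min(width, c + 2))
                    if bayer_channel(rr, cc) == channel
                ]
                rgb.append(sum(samples) / len(samples))  # interpolated
            out_row.append(tuple(rgb))
        result.append(out_row)
    return result


# Usage: a uniform colour survives the round trip unchanged.
flat = [[(100, 150, 200)] * 4 for _ in range(4)]
raw = mosaic(flat)
print(raw[0][:2], raw[1][:2])  # prints [100, 150] [150, 200]
print(demosaic(raw)[1][2])     # prints (100.0, 150.0, 200.0)
```

For interior pixels, averaging the same-colour photosites in the 3 x 3 window is exactly the classic bilinear scheme: four orthogonal greens or four diagonal reds/blues at red and blue sites, and two neighbours at green sites.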

Unfortunately, because these calculations work from an incomplete set of data, errors do arise in the reproduction of the image, most notably colour aliasing and moiré. Colour aliasing occurs when the light from fine detail in the scene does not register on all three colour sensors, so the interpolation makes errors that usually appear as rainbow-like patterns within the fine-detail regions of the image. Moiré also affects fine detail near the resolution limit, but manifests itself in a different way: instead of a rainbow effect, false maze-like structures are created, although colour artifacts may also be noted.
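
Colour aliasing can be reproduced with a simple sketch. The snippet below repeats a compact version of the RGGB sampling and neighbour-averaging demosaic (both assumptions, as is the test pattern) and feeds it a neutral scene of alternating one-pixel black and white columns, i.e. detail right at the sensor's resolution limit. Every red photosite happens to land on a white column and every blue one on a black column, so the reconstruction contains no greys at all, only false reds, oranges and yellows.

```python
# Hypothetical demo of colour aliasing (not from the article): an RGGB
# mosaic of a black-and-white stripe pattern, rebuilt by neighbour averaging.

def bayer_channel(row, col):
    """0 = red, 1 = green, 2 = blue for an RGGB tile."""
    return (row % 2) + (col % 2)


def demosaic(raw):
    """Keep each measured value; average same-colour neighbours (3 x 3 window)."""
    height, width = len(raw), len(raw[0])
    result = []
    for r in range(height):
        out_row = []
        for c in range(width):
            pixel = []
            for channel in range(3):
                if channel == bayer_channel(r, c):
                    pixel.append(float(raw[r][c]))
                    continue
                samples = [
                    raw[i][j]
                    for i in range(max(0, r - 1), min(height, r + 2))
                    for j in range(max(0, c - 1), min(width, c + 2))
                    if bayer_channel(i, j) == channel
                ]
                pixel.append(sum(samples) / len(samples))
            out_row.append(tuple(pixel))
        result.append(out_row)
    return result


# One-pixel-wide white/black columns: a neutral scene at the resolution limit.
stripes = [[(255, 255, 255) if c % 2 == 0 else (0, 0, 0) for c in range(6)]
           for _ in range(6)]
raw = [[pixel[bayer_channel(r, c)] for c, pixel in enumerate(row)]
       for r, row in enumerate(stripes)]
rebuilt = demosaic(raw)
print(rebuilt[2][2], rebuilt[2][3])  # prints (255.0, 127.5, 0.0) (255.0, 0.0, 0.0)
```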

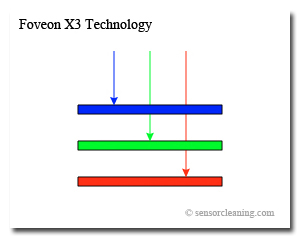

Foveon X3 Filter

The X3 sensor, developed and produced by Foveon Inc. together with its silicon foundry partner Dongbu Electronics, introduces a new approach to how digital imaging sensors filter light. Instead of each photosite being filtered to accept only one of the three primary colours, as in the Bayer filter method, X3 stacks three sensor layers at each pixel location, so data for all three primary colours is recorded at every pixel. Since all the colour information for each pixel is recorded, no demosaicing is needed to interpolate colours, which leads to truer colours and fewer image artifacts such as those produced by aliasing and moiré. X3 sensors also capture more photons per pixel, because none are filtered out as they are in a Bayer filter, which contributes to better image quality.

Although X3 technology has been around since 2002, adoption has been slow: as of this writing it has found a home in only five camera products (Sigma SD9 and SD10, Polaroid x530, and Hanvision HVDUO-5M and HVDUO-10M), with two more on the horizon (Sigma SD14 and DP1).

Two minor issues associated with X3 technology are pixel ambiguity and low-light performance. The pixel ambiguity comes from the megapixel rating that Sigma gives its SD10 camera. Sigma calls it a 10.2 megapixel camera, but it produces images with a resolution of only 2268 x 1512, the resolution associated with a 3.4 megapixel camera. The discrepancy arises because Sigma counts the pixels on every layer (3.4 MP red, 3.4 MP green and 3.4 MP blue) to reach a grand total of 10.2 MP. As for low light, many professional users have noted that image quality suffers at high ISO settings.
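
The megapixel arithmetic can be checked directly. This sketch uses the SD10 output resolution quoted above and assumes three layers per pixel location; the variable names are illustrative only.

```python
# Illustrative check of the SD10 pixel counting described above.
WIDTH, HEIGHT = 2268, 1512  # output resolution quoted for the Sigma SD10
LAYERS = 3                  # red, green and blue layers at every pixel location

pixel_locations = WIDTH * HEIGHT         # pixels in the saved image
photosites = pixel_locations * LAYERS    # sensing elements summed over the layers

print(f"{pixel_locations:,} pixels (~{pixel_locations / 1e6:.1f} MP)")
print(f"{photosites:,} photosites (~{photosites / 1e6:.1f} MP)")
# prints:
# 3,429,216 pixels (~3.4 MP)
# 10,287,648 photosites (~10.3 MP)
```

Counted exactly, the three layers hold about 10.3 million photosites; the 10.2 MP figure quoted above corresponds to three times the rounded 3.4 MP per layer. Either way, the saved image contains only about 3.4 million pixels.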